Is Your Data Centre Facility Ready for the AI Revolution?

The conversation around Artificial Intelligence (AI) has shifted from “if” to “how.” As the recent Vertiv white paper “Transforming Colocation Facilities to Meet Demand for AI Compute” highlights, business leaders are overwhelmingly optimistic about AI. However, the path to scaling AI pilots into full-scale production is hitting a familiar bottleneck: legacy infrastructure.

For colocation providers and enterprises in Australia, the challenge is no longer just about finding space. It’s about delivering the unprecedented power, cooling, and scalability that AI workloads demand. As a trusted Vertiv partner, Ecanet Engineers specialises in bridging the gap between this ambitious vision and practical, reliable execution.

Here is how we are helping Australian data centres navigate the three core pillars of AI readiness: power, cooling, and scalability.

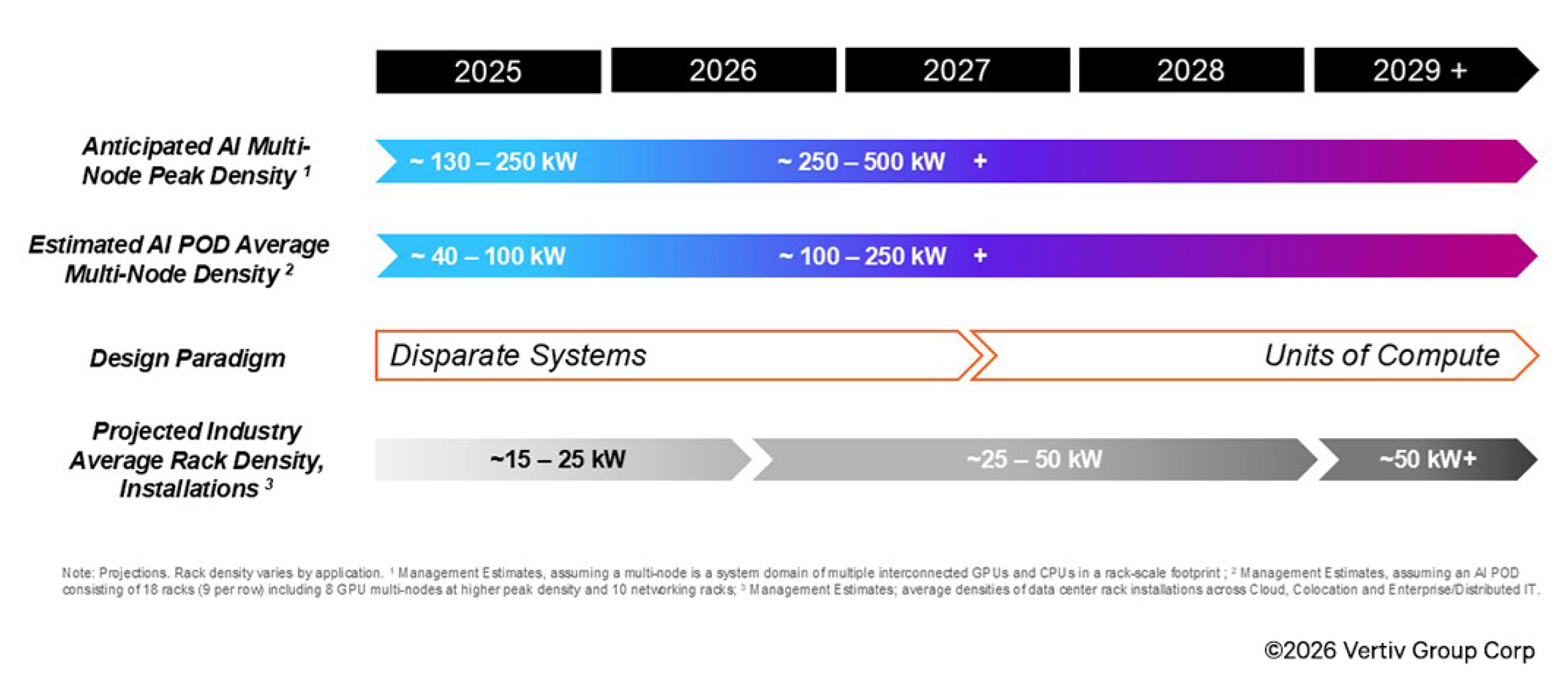

The density of AI deployments is projected to double in the coming years.

1. Power: Moving Beyond “Always On” to “AI-Ready”

Traditional IT loads are predictable. AI workloads, driven by GPUs, are anything but. They create rapid load fluctuations that can destabilise legacy power systems.

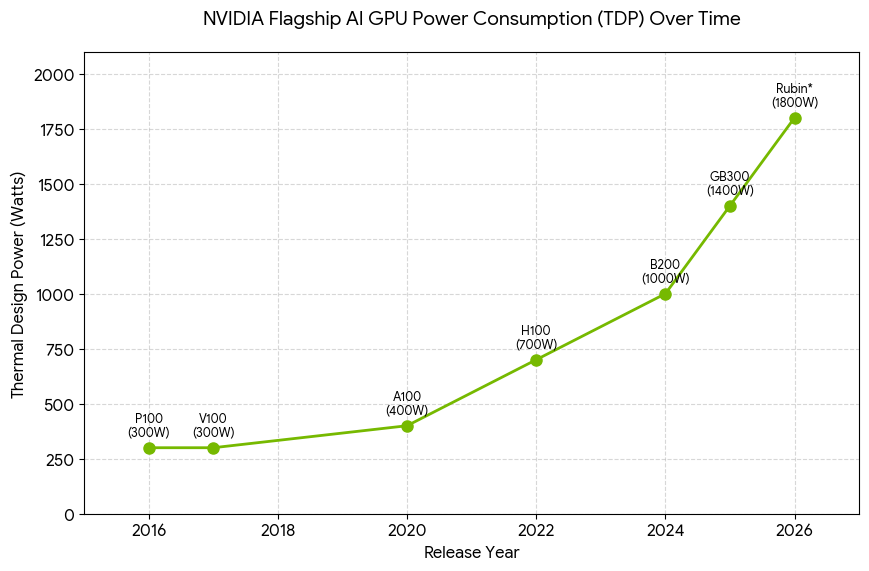

The scale of the challenge is best illustrated by the relentless increase in GPU power draw. In 2016, the NVIDIA P100 consumed around 300 W per GPU. By 2022, the H100 had reached 700 W. Today, the GB300 pushes 1400 W, and next-generation Rubin architectures are expected to approach 1800 W. When dozens or hundreds of these GPUs are clustered in a single rack, total rack densities are set to soar from today's 40–100 kW to well over 250 kW in the near future.
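
To make the arithmetic concrete, the following is a minimal sketch that multiplies the per-GPU figures quoted above by a GPU count per rack. The count of 72 GPUs and the `overhead_factor` parameter are assumptions chosen for illustration; they do not come from the Vertiv white paper.

```python
# Per-GPU power figures quoted in the article, in watts.
GPU_POWER_W = {
    "P100 (2016)": 300,
    "H100 (2022)": 700,
    "GB300": 1400,
    "Rubin (expected)": 1800,
}


def rack_power_kw(gpu_power_w, gpus_per_rack, overhead_factor=1.0):
    """Estimate rack power in kW.

    overhead_factor is a hypothetical multiplier for non-GPU load
    (CPUs, networking, fans); 1.0 counts the GPUs alone.
    """
    return gpu_power_w * gpus_per_rack * overhead_factor / 1000.0


# Assumed GPU count per rack, for illustration only.
GPUS_PER_RACK = 72

for name, watts in GPU_POWER_W.items():
    print(f"{name}: {rack_power_kw(watts, GPUS_PER_RACK):.1f} kW")
```

Even with GPUs alone and no overhead, the same rack position moves from tens of kilowatts to well over a hundred as the per-GPU figure rises.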

NVIDIA GPU TDP (Thermal Design Power)


According to the Vertiv white paper, AI training cycles can cause power draws to swing from 30% to 100% in milliseconds. To maintain uptime (a non-negotiable for your clients), power systems must be engineered for this variability.
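
As a rough illustration of what a 30% to 100% swing means for the power chain, the sketch below converts it into a load step and an average ramp rate. The 100 kW rack rating and the 10 ms ramp time are hypothetical inputs; the article only says the swing happens "in milliseconds".

```python
def load_step_kw(rated_kw, low_fraction=0.30, high_fraction=1.00):
    """Size of the load step in kW when draw jumps between two fractions of rated power."""
    return rated_kw * (high_fraction - low_fraction)


def ramp_rate_kw_per_s(step_kw, ramp_ms):
    """Average ramp rate in kW per second for a step that takes ramp_ms milliseconds."""
    return step_kw / (ramp_ms / 1000.0)


# Hypothetical example: a 100 kW rack swinging from 30% to 100% in 10 ms.
step = load_step_kw(100.0)
rate = ramp_rate_kw_per_s(step, 10.0)
print(f"Load step: {step:.1f} kW, average ramp: {rate:.0f} kW/s")
```

Multiplied across a hall of racks stepping in sync, this is the variability the upstream UPS and distribution have to absorb.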

Modern approaches include integrating Battery Energy Storage Systems (BESS) with UPS platforms to enable peak shaving and grid stabilisation, protecting facilities from growing grid instability. High-density distribution methods - such as overhead busway systems - allow flexible, high-capacity power delivery without costly re-cabling or downtime. Additionally, advanced AI load simulators can validate power chains against real AI workload profiles, ensuring systems handle variability without false alarms or unnecessary battery drain.
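
The idea behind peak shaving can be sketched in a few lines: the grid supplies load up to a set limit, and the battery covers anything above it. This is a simplified illustration only. The function name, the load profile, and the 80 kW grid limit are all hypothetical, and the sketch ignores battery capacity, recharge, and conversion losses.

```python
def peak_shave(load_kw, grid_limit_kw):
    """Split a load profile into grid supply and battery supply.

    Returns two lists of the same length as load_kw:
    grid draw (capped at grid_limit_kw) and battery discharge (the excess).
    """
    grid = [min(load, grid_limit_kw) for load in load_kw]
    battery = [max(0.0, load - grid_limit_kw) for load in load_kw]
    return grid, battery


# Hypothetical load samples in kW, with an assumed 80 kW grid limit.
profile = [30.0, 100.0, 60.0, 95.0]
grid, battery = peak_shave(profile, 80.0)
print("Grid draw (kW):   ", grid)
print("Battery draw (kW):", battery)
```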

2. Cooling: Managing the Heat of High-Density Pods

The white paper makes it clear: air cooling has reached its physical limits for AI. With GPUs generating massive heat, liquid cooling is no longer a “nice to have” - it is a requirement for performance.

However, a full rip-and-replace of cooling infrastructure isn’t always feasible, nor is it necessary.

A hybrid, engineering-driven approach to thermal management is now the industry standard. Direct-to-chip liquid cooling can handle over 70% of the heat at the source (the GPUs), while existing air cooling manages the residual load. Solutions that allow smooth transitions from air to liquid cooling protect upfront capital investment. Strategic placement of Coolant Distribution Units (CDUs) - whether in-rack, in-row, or in a mechanical gallery - minimises fluid routing complexity and maximises efficiency. Rigorous commissioning, comprehensive leak detection networks, and lifecycle maintenance plans (including fluid quality testing and system flushing) ensure long-term reliability.
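
The hybrid split can be expressed as simple arithmetic. The sketch below takes the 70% liquid-capture figure quoted above as a default and works out how much heat is left for the existing air system. The 120 kW rack load is a hypothetical example, and the function assumes all electrical power ends up as heat.

```python
def split_heat_load(rack_kw, liquid_fraction=0.70):
    """Return (liquid_kw, air_kw) for a rack, given the share captured by liquid cooling."""
    if not 0.0 <= liquid_fraction <= 1.0:
        raise ValueError("liquid_fraction must be between 0 and 1")
    liquid_kw = rack_kw * liquid_fraction
    air_kw = rack_kw - liquid_kw
    return liquid_kw, air_kw


# Hypothetical 120 kW rack with 70% of heat removed by direct-to-chip cooling.
liquid, air = split_heat_load(120.0)
print(f"Liquid: {liquid:.1f} kW, residual air load: {air:.1f} kW")
```

The residual figure is the number to compare against what the existing air-cooling plant can already handle per rack.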

3. Scalability: Speed to Market with Prefabrication

The race to capture AI market share means you cannot afford 18-month build cycles. You need speed and predictability.

Preconfigured, factory-integrated solutions can cut deployment times by up to 50%. Using AI-focused reference designs (co-developed with leading chipmakers), facilities can deploy proven templates for rack densities ranging from 10 kW to over 140 kW. For greenfield deployments or major expansions, prefabricated modular data centres arrive on-site fully integrated with direct-to-chip liquid cooling, close-coupled UPS/switchgear, and power distribution. This approach offers a compact footprint, accelerated build times, and predictable costs. Scalable architecture allows for phased growth, deploying modular blocks of power and cooling that scale precisely with customer demand.
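
Phased growth with modular blocks can be planned with a simple calculation: at each phase, install only as many blocks as the forecast demand requires. The sketch below is illustrative. The 500 kW block size and the demand forecast are hypothetical values, not figures from the white paper.

```python
import math


def blocks_required(demand_kw, block_kw):
    """Smallest number of whole blocks that covers the demand."""
    return math.ceil(demand_kw / block_kw)


def phased_plan(demand_by_phase_kw, block_kw):
    """Return a list of (demand_kw, blocks_added, blocks_installed) per phase."""
    plan = []
    installed = 0
    for demand in demand_by_phase_kw:
        needed = blocks_required(demand, block_kw)
        added = max(0, needed - installed)  # never remove blocks if demand dips
        installed += added
        plan.append((demand, added, installed))
    return plan


# Hypothetical forecast in kW per phase, with assumed 500 kW blocks.
for demand, added, installed in phased_plan([400, 900, 2100], 500):
    print(f"Demand {demand} kW: add {added} block(s), {installed} installed")
```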

Partnering for the Future

The Vertiv white paper confirms that AI workloads are moving to colocation environments. For Australian providers, this is a massive opportunity. But success requires moving away from traditional data centre management and toward a specialised approach that manages power variability, high-density heat, and rapid scalability.

At Ecanet, we bring the expertise of a Vertiv partner to your local market. We deliver end-to-end engineering solutions, from concept to commissioning, that make AI-ready infrastructure a reality.

Our Services: Built for AI-Ready Infrastructure

Data Centre Design & Build – Tier-certified design, modular/prefabricated data centre supply, and turnkey EPC delivery tailored for high-density AI workloads.

Electrical, Mechanical & HVAC – MV/LV power distribution, energy auditing, CFD simulation, and advanced cooling system integration (liquid and hybrid).

Project & Facility Management – End-to-end project delivery, asset lifecycle planning, and 24/7 operational support to ensure reliability at scale.

Industrial Automation & IT – PLC/SCADA configuration, industrial cybersecurity, and DCIM integration for intelligent infrastructure management.

With deep expertise across data centres, industrial manufacturing, and critical infrastructure, Ecanet is your local partner for scalable, efficient, and future-ready colocation facilities.

Ready to make your facility AI-ready?

Contact us today to discuss how we can power and cool your next AI-ready deployment.

Disclaimer: This blog references insights from the Vertiv white paper “Transforming Colocation Facilities to Meet Demand for AI Compute”. For the full technical specifications and case studies, please contact us to speak with a specialist.