HPC vs. AI: Same Roots, Different Branches (And Why Your Data Centre Needs to Know the Difference)

For years, High-Performance Computing (HPC) was the undisputed king of the data center. It simulated exploding stars, predicted hurricane paths, and modeled drug molecules. Then came Artificial Intelligence (AI), which took the same raw hardware and started teaching it to paint, write, and drive cars.

Is AI just the new HPC? Not exactly.

While they share a common ancestry in parallel computing, HPC and AI have diverged significantly in architecture, technology, and applications. Most critically, they have wildly different appetites for power, cooling, and network bandwidth. If you are planning a next-gen cluster, confusing the two could cost you millions in infrastructure.

Let’s break down the DNA of these two computing giants.

The Core Similarity: The Need for Speed (in Parallel)

Before we dive into differences, let’s address the overlap. Both HPC and AI are obsessed with parallel processing.

The "Divide and Conquer" approach: Neither a single CPU core nor a cheap GPU can solve these problems. Both paradigms break massive workloads into thousands of smaller pieces to be solved simultaneously.

Accelerators are king: Both fields rely almost exclusively on GPUs (or specialized accelerators like TPUs) paired with CPUs.

The Cluster is the Computer: Whether you are running a weather simulation or training GPT-5, you need a fleet of nodes connected by a high-speed fabric.

But this is where the roads fork.

The Architecture & Technology Divide

HPC: The Precision Mathematician

HPC is deterministic. It is physics. You give it an equation (e.g., fluid dynamics), and it calculates the exact answer.

Precision is Everything: HPC lives in Double Precision (FP64). When simulating a nuclear blast, rounding errors are not acceptable. HPC chips need insane FP64 performance.

The Interconnect: HPC relies on InfiniBand (or Slingshot) with extremely low latency (sub-microsecond). These simulations require the nodes to "sync up" constantly. If one node lags by 1 millisecond, the entire 100,000-node job stalls.

Memory Bandwidth: Consistent, predictable access to memory is crucial for structured data (grids, matrices).

AI: The Stochastic Statistician

AI is probabilistic. It is pattern matching. You give it a trillion words, and it guesses the next one.

Lower Precision is Faster: AI training discovered it doesn't need perfect math. It thrives on Mixed Precision (FP16, BF16, INT8) . By using smaller numbers, AI chips move data twice as fast as HPC chips for the same power draw.

The Interconnect (Scale vs. Speed): While AI needs speed, it needs scalability more. Technologies like NVLink (NVIDIA) and Scale-Out fabrics are designed to link 8 GPUs together into a single "giant brain" so they can all share one massive model.

Sparsity: AI models are "sparse" (lots of zeros). Modern AI chips have special cores (e.g., Tensor Cores) that skip multiplying by zero, whereas HPC does the math regardless.

The Analogy:

HPC is like a footy team running a set play from a stoppage. Every player has a fixed position and task – ruck taps to rover, rover handballs to midfielder, midfielder kicks long. Anyone goes off‑script and the play collapses. That’s HPC: precise, synchronised, and unforgiving.

AI training is like a pack of kids chasing a ball on a windy oval. No formation, no planned handballs – just a chaotic scrum of kicks and scrambles. But after enough fumbles, the ball ends up near the goals. That’s AI training: messy, unpredictable, but surprisingly effective.

AI inference is like the full‑forward who takes a mark. Ball comes in, he grabs it, kicks for goal – all in under a second. No thinking, no huddle, just instant reaction from practice. That’s inference: lightning‑fast, automatic, and over before you blink.

The Applications:

HPC runs simulations and physics‑based calculations like weather forecasting or crash testing, working with structured data (grids and matrices) where every calculation must be exact - a rounding error can ruin a rocket trajectory. AI, by contrast, handles pattern recognition and content generation (chatbots, image creation, recommendations) using unstructured data like text and video, measuring success by whether the answer makes sense to a human rather than by raw speed, and tolerating small numerical errors because “close enough” is fine for guessing the next word or identifying a cat in a photo.

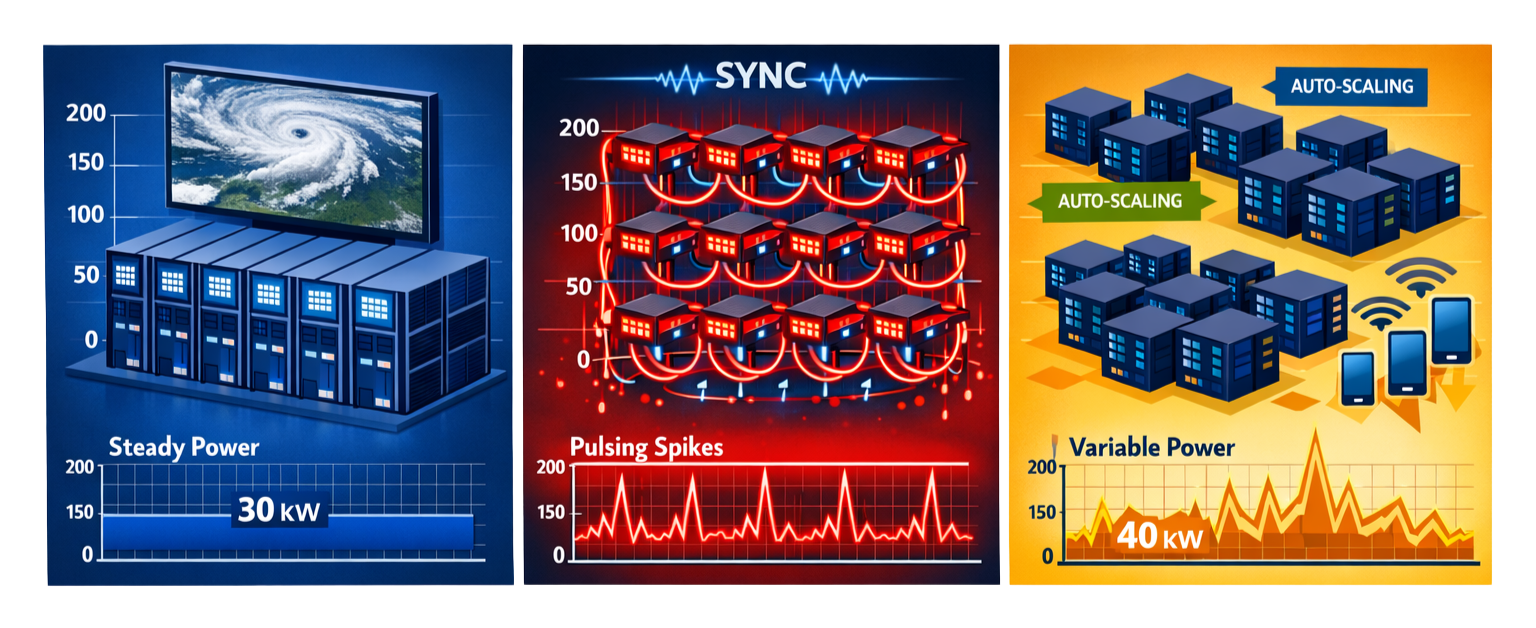

HPC Simulation vs. AI Training vs. AI Inference. (image generated by AI - illustrative purposes only)

The Critical Infrastructure: Power, Cooling, and Networking

Here’s where the infrastructure gap becomes critical. Repurposing an HPC cluster for AI training will likely cause power distribution failures and switch overheating.

Power: The Amp Spike

HPC: Steady, predictable power draw. A traditional CPU+GPU HPC node might pull 400-600W constantly. Loads are uniform.

AI Training: Explosive power cycling. AI training nodes (e.g., 8x H100 GPUs) pull 6 kW to 10 kW+ per rack. Worse, AI has a "sync and burst" pattern. All GPUs draw max power at the exact same moment during gradient synchronization. This causes power "micro-oscillations" that can trip legacy PDU breakers.

AI Inference / Production: Variable, bursty power. A cluster serving ChatGPT might idle at 20% power during low traffic, then spike to 100% for 100 milliseconds, then drop again. This is easier on PDUs but harder on cooling systems that expect steady heat loads.

2. Cooling: Air vs. Liquid

HPC: Historically air-cooled (10-30kW per rack). You can get away with raised floors and fan arrays.

AI Training: Air cooling is impossible for dense clusters. AI training racks routinely hit 80-150kW per rack. Direct-to-Chip Liquid Cooling (DLC) or full immersion is mandatory.

AI Inference / Production: Depends on scale. A rack of 8x L40S GPUs (approx 15-20kW) can still be air-cooled. But at hyperscale (thousands of inference nodes), operators often use liquid cooling for energy efficiency, even though it’s not strictly required.

3. Networking: Latency vs. Throughput

HPC Needs Latency: MPI jobs are chatty. They send thousands of tiny "Hello, are you done yet?" messages per second. Networks optimize for low latency (nanoseconds).

AI Training Needs Bandwidth: Training sends massive weight updates (gigabytes of data) between GPUs every few seconds. AI optimizes for highest possible bandwidth (400G/800G). Latency matters but is secondary.

AI Inference / Production Needs:

Low latency to users: The network from the GPU to the end-user must be fast (<50ms round trip).

High bisection bandwidth for distributed inference (e.g., splitting a model across multiple GPUs).

Load balancing for unpredictable traffic spikes.

The Convergence (The Hybrid Future)

You might notice the lines between HPC and AI are starting to blur.

AI is helping HPC. Machine learning can now solve physics equations faster than traditional methods.

HPC is helping AI. Simulation can generate huge amounts of synthetic data to train robots or self‑driving cars.

Hardware is also changing. NVIDIA’s Grace Hopper chip combines an HPC‑friendly CPU (Grace) with an AI‑optimised GPU (Hopper). They talk to each other over an ultra‑fast internal connection. That one chip can do both jobs: simulate climate at high precision and run AI to predict the next storm.

So the future isn’t HPC or AI. It’s HPC and AI, working together.

The Bottom Line

Choose HPC when you need the right answer.

That means physics, engineering, or any deterministic simulation. You’ll need high precision (FP64) and a network optimised for low latency.

Choose AI Training when you need to build a foundation model.

You’ll need dense clusters of powerful GPUs, mixed precision math, and - most importantly - liquid cooling plus massive network bandwidth.

Choose AI Inference when you need to serve that model to millions of users.

You’ll need auto‑scaling, low‑latency connections to the outside world, and cost‑effective accelerators (not always the most expensive GPUs).

A warning for IT managers

Do not try to retrofit an air‑cooled HPC facility for AI training. You will fail. The power density and cooling needs are fundamentally different.

Plan for liquid cooling from day one - or rent cloud AI instances until you rebuild your data centre.

Inference is easier - it can often run on air‑cooled gear. But don’t assume that will last forever. Models grow, and so do their cooling demands.