Beyond PUE: Why Total Power Usage Effectiveness (TUE) Is the Metric Liquid Cooling Has Been Waiting For

For over a decade, Power Usage Effectiveness (PUE) has been the industry's gold standard for measuring data centre efficiency. The metric has undoubtedly served its purpose well, helping operators reduce waste and optimize infrastructure design. But as the industry pushes toward exascale computing, AI workloads, and increasingly dense racks, a critical question emerges: Is PUE still enough? For facilities leveraging liquid cooling, the answer is increasingly becoming a clear "no."

Enter Total Power Usage Effectiveness (TUE) - a more comprehensive metric that is finally getting the attention it deserves.

The PUE Blind Spot

PUE is calculated by dividing the total facility energy consumption by the energy used solely by IT equipment. The ideal, though unattainable, PUE is 1.0, where all power entering the data centre is used exclusively for computing. But here lies the problem: PUE's simplicity is also its greatest weakness. It does not account for power distribution and cooling losses inside the IT equipment itself.

In traditional air-cooled environments, this blind spot may seem minor. However, as data centers adopt liquid cooling, the limitations become glaring. Liquid cooling influences both the IT power (the denominator) and total facility power (the numerator), making traditional PUE an unreliable gauge of true efficiency. One industry expert has pointedly described PUE as "an incomplete metric" because it considers only the power delivered to the rack, rather than the use to which that power is put: computing, cooling, or other functions.

Furthermore, critics argue that PUE fails to measure what truly matters: the actual useful output of the servers. Nvidia has gone so far as to call PUE an "ineffective efficiency metric for AI workloads" that needs to be replaced, precisely because it does not capture how efficiently servers use energy.

Introducing TUE: The Total Efficiency Picture

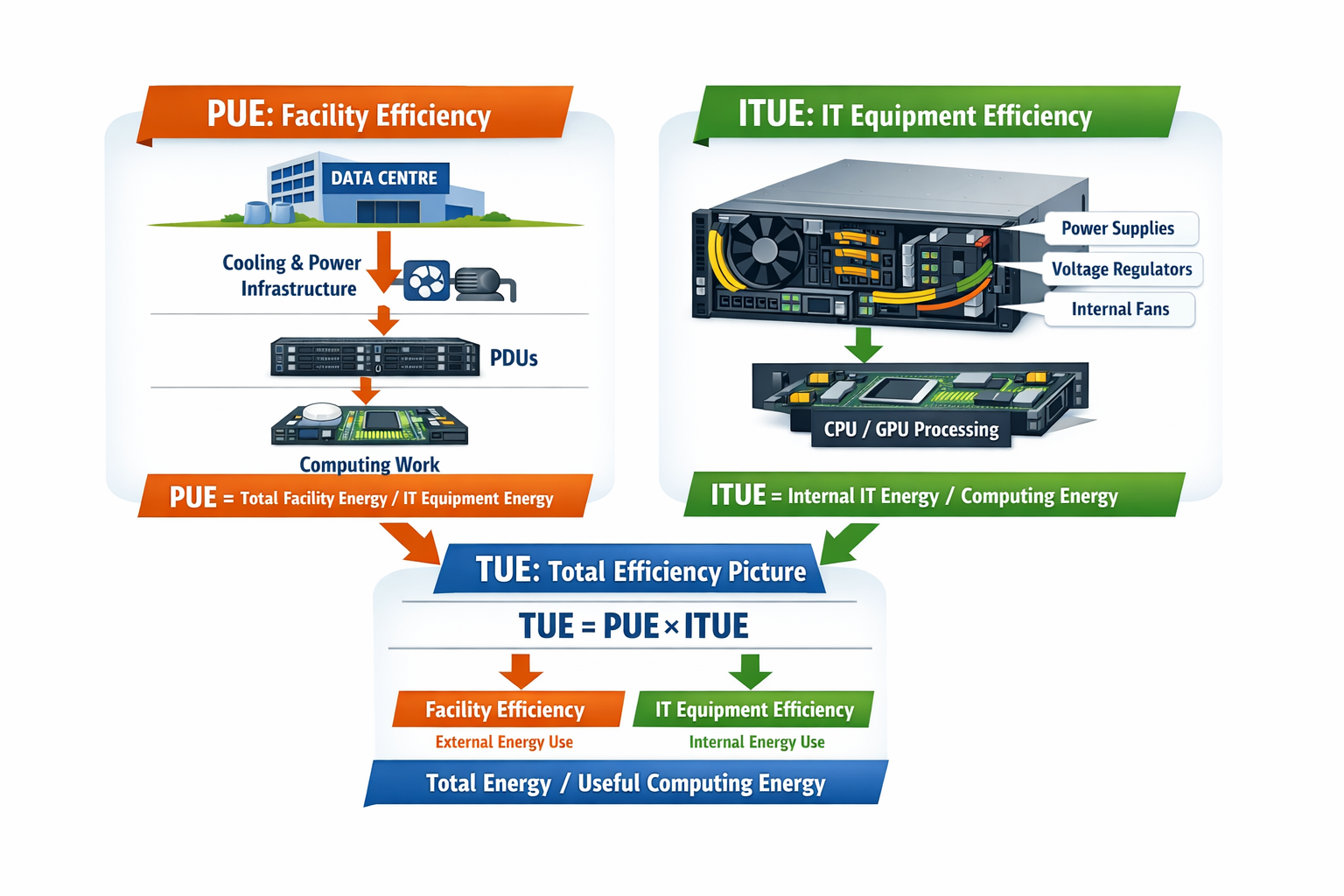

TUE, or Total Power Usage Effectiveness, was proposed to address precisely these shortcomings. Pioneered by researchers at Oak Ridge National Laboratory, TUE is calculated by combining two complementary metrics:

What is TUE?

TUE = PUE × ITUE

Where ITUE (IT-Power Usage Effectiveness) is a server-specific metric similar to PUE but applied "inside" the IT equipment itself. By multiplying PUE (facility-level efficiency) with ITUE (server-level efficiency), TUE provides a ratio of total energy - both external support energy and internal support energy - to the specific energy actually used for computation.

Put simply: while PUE tells you how efficiently your facility delivers power to IT equipment, TUE tells you how efficiently that power is used to do actual computing work. It accounts for losses from power distribution units, voltage regulators, fans, pumps, and cooling subsystems that reside within the IT equipment itself - losses that PUE completely ignores.
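Written out as ratios, the product collapses into a single fraction. The symbols below (E_total for all energy entering the facility, E_IT for energy delivered to the IT equipment, E_compute for energy reaching the compute components) are notation introduced here for illustration, not taken from a specific standard:

$$
\mathrm{PUE} = \frac{E_{\text{total}}}{E_{\text{IT}}}, \qquad
\mathrm{ITUE} = \frac{E_{\text{IT}}}{E_{\text{compute}}}
$$

$$
\mathrm{TUE} = \mathrm{PUE} \times \mathrm{ITUE}
= \frac{E_{\text{total}}}{E_{\text{IT}}} \cdot \frac{E_{\text{IT}}}{E_{\text{compute}}}
= \frac{E_{\text{total}}}{E_{\text{compute}}}
$$

The E_IT term cancels, which is why TUE is insensitive to where the boundary between "facility" and "IT equipment" is drawn.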

What Is a “Good” TUE Value? Comparing TUE to PUE Benchmarks

Because the industry has decades of familiarity with PUE, it's natural to ask: What TUE number should I aim for? The answer requires a shift in perspective, especially when looking at the most recent industry data.

For PUE, the Uptime Institute's 2025 Global Data Center Survey reveals an industry-wide weighted average PUE of 1.54, a figure that has remained virtually unchanged for the sixth consecutive year. This stagnation, largely due to legacy infrastructure and cooling limitations, underscores the challenge of driving efficiency with traditional metrics. The industry broadly considers:

< 1.2 - Excellent (typically achieved with free cooling or advanced liquid cooling)

1.2 - 1.5 - Good to average (common in modern air‑cooled facilities)

> 1.5 - Poor (indicating significant facility‑level inefficiency)

For TUE, because it includes internal IT losses (ITUE), a “good” TUE will always be higher than the facility’s PUE. No data centre can achieve a TUE of 1.0, since even the most efficient server must consume some power for onboard voltage regulation, memory refresh, and internal cooling.

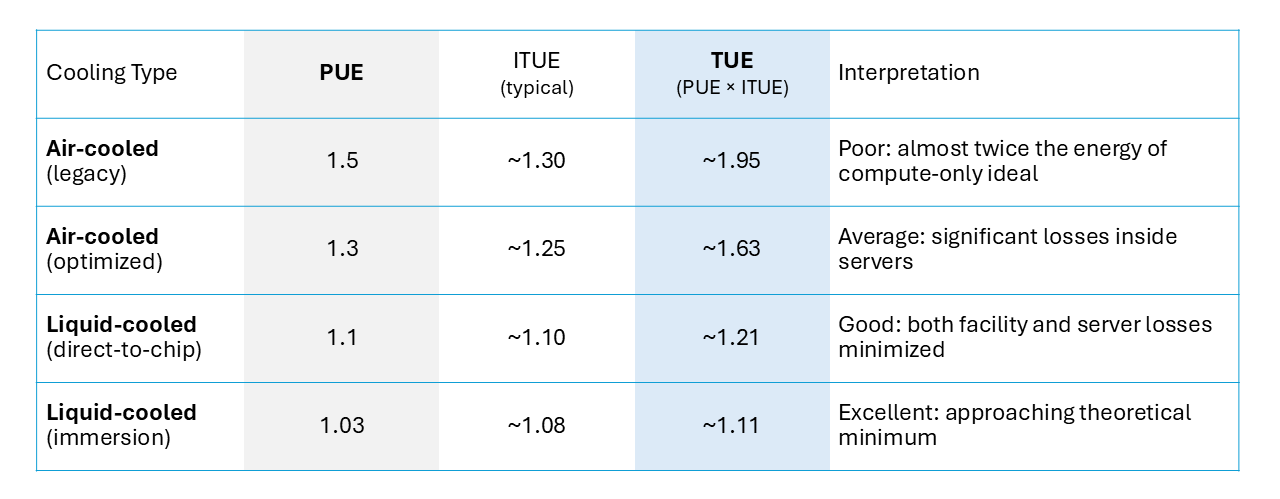

To understand realistic TUE targets, consider two typical scenarios:

Key takeaway: A good TUE for liquid‑cooled data centers falls in the range of 1.10 - 1.25. For air‑cooled legacy facilities, TUE values above 1.6 are common. More importantly, TUE reveals efficiency differences that PUE obscures.

Two data centers could both report a PUE of 1.2, but one might have an ITUE of 1.25 (TUE = 1.50) while another achieves ITUE 1.10 (TUE = 1.32). The second facility is doing substantially more useful computation per total watt - a distinction PUE alone cannot capture.
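The comparison above can be reproduced with a few lines of Python. This is a minimal sketch: the function names `tue` and `compute_share` and the site labels are hypothetical, and the inputs are simply the PUE and ITUE figures quoted in the text.

```python
def tue(pue: float, itue: float) -> float:
    """Combine facility-level PUE and server-level ITUE (TUE = PUE x ITUE)."""
    return pue * itue


def compute_share(pue: float, itue: float) -> float:
    """Fraction of total facility power that reaches compute components (1 / TUE)."""
    return 1.0 / tue(pue, itue)


# Hypothetical sites using the figures quoted in the text above.
sites = {"Site A": (1.2, 1.25), "Site B": (1.2, 1.10)}

for name, (pue, itue) in sites.items():
    print(
        f"{name}: PUE={pue:.2f} ITUE={itue:.2f} "
        f"TUE={tue(pue, itue):.2f} compute share={compute_share(pue, itue):.1%}"
    )
```

Under these inputs, roughly 67% of Site A's total power reaches compute components, against roughly 76% for Site B, even though both report the same PUE.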

Why TUE Matters for Liquid Cooling

Liquid cooling is rapidly becoming the standard for modern data centres. With rack densities regularly exceeding 30 kW, traditional air cooling systems are struggling to keep up. Liquid cooling offers up to 15% better TUE and enables far more precise control over energy consumption and thermal output.

But here is the critical insight: accurately measuring the efficiency gains from liquid cooling requires TUE.

A team of specialists from NVIDIA and Vertiv conducted the first major analysis of liquid cooling's impact on data centre efficiency and concluded that PUE is not a good measure of liquid cooling efficiency. Instead, alternative metrics such as TUE proved more helpful in guiding design decisions about introducing liquid cooling into air-cooled environments. Studies have confirmed that TUE reveals considerable efficiency gains across all liquid-based cooling systems compared to traditional air cooling.

Why is PUE inadequate for liquid cooling assessment? The reason lies in the counterintuitive behavior of the metric: improvements in IT equipment efficiency can actually cause PUE to increase (worsen), even as overall data centre efficiency improves. This paradoxical effect becomes particularly concerning as PUE levels drop below 1.2, or when liquid cooling becomes significant. TUE corrects for this by capturing efficiency improvements at both the facility and server levels simultaneously.
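A small numerical sketch makes the paradox concrete. All power figures below are invented for illustration (they are not measurements from any study), and the `Site` class is a hypothetical helper. The scenario assumes that liquid cooling cuts the power drawn by server fans from 100 kW to 20 kW while facility overhead and compute power stay the same.

```python
from dataclasses import dataclass


@dataclass
class Site:
    overhead_kw: float    # facility cooling and power distribution outside the IT equipment
    it_support_kw: float  # fans, pumps and conversion losses inside the IT equipment
    compute_kw: float     # power reaching the compute components

    @property
    def it_kw(self) -> float:
        return self.it_support_kw + self.compute_kw

    @property
    def total_kw(self) -> float:
        return self.overhead_kw + self.it_kw

    @property
    def pue(self) -> float:
        return self.total_kw / self.it_kw

    @property
    def itue(self) -> float:
        return self.it_kw / self.compute_kw

    @property
    def tue(self) -> float:
        return self.total_kw / self.compute_kw


# Illustrative numbers only: same compute load, smaller in-server fan load afterwards.
before = Site(overhead_kw=200, it_support_kw=100, compute_kw=900)
after = Site(overhead_kw=200, it_support_kw=20, compute_kw=900)

for label, site in (("before", before), ("after", after)):
    print(f"{label}: total={site.total_kw:.0f} kW "
          f"PUE={site.pue:.3f} ITUE={site.itue:.3f} TUE={site.tue:.3f}")
```

In this sketch total power falls from 1200 kW to 1120 kW for the same compute load, yet PUE rises from 1.200 to about 1.217 because the denominator shrank. TUE moves in the expected direction, from about 1.333 to about 1.244.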

Beyond Energy: TUE as a Sustainability Metric

Another critical advantage of TUE is its ability to extend beyond pure energy consumption. In a broader interpretation, the acronym is also expanded as Total Usage Effectiveness, a metric that assesses the overall efficiency of resource consumption within data centres and covers water and carbon as well as power. This makes TUE particularly valuable for evaluating the holistic environmental footprint of data centres - a pressing concern as energy costs represent 40-60% of operational expenses and water scarcity intensifies globally.

Liquid cooling systems inherently align with this comprehensive view. They can reduce water usage by 31-52% over their entire life cycles compared to traditional air cooling. They reduce or eliminate the need for energy-intensive air-moving equipment and enable waste heat reuse - benefits that TUE can properly account for in a way that PUE cannot.

Conclusion: A Better Metric for a Better Future

Despite being introduced over a decade ago, TUE remains largely overlooked by the majority of data centre operators. As one industry observer bluntly put it, the metric has “gone largely unnoticed and unadopted by a large percentage of the industry.” That status quo is no longer acceptable.

It’s time for the industry to move beyond its comfortable reliance on PUE alone. While the search for new efficiency metrics will continue, the immediate priority should be raising awareness of TUE and establishing it as a complementary - or even primary - benchmark for data center energy performance. Doing so would give operators a far more accurate tool to identify waste, justify investments, and drive meaningful improvements where they matter most: at the server level.

For any organization transitioning to liquid cooling - whether direct-to-chip, immersion, or hybrid designs - adopting TUE is not merely a best practice; it is a strategic necessity. Only TUE can capture the full efficiency picture, from the facility’s chiller plant down to the voltage regulators on a single motherboard. With that holistic view, operators can make smarter decisions, optimize total cost of ownership, and credibly report progress toward sustainability targets.

PUE has served the industry well, but its limitations have become increasingly apparent in the era of liquid cooling and AI‑driven compute density. Total Power Usage Effectiveness addresses these shortcomings by accounting for efficiency at both the facility and server levels, providing a true measure of how effectively power is converted into computational output.

As data centers continue to evolve, so too must the metrics we use to measure them. TUE is not just a refinement of PUE - it is the comprehensive metric the industry needs to accurately assess, compare, and optimize the efficiency of modern, liquid‑cooled data centers. The question is no longer whether we should adopt TUE, but how quickly we can make it the new standard.